On November 15th, 2025, I brought my brand-new project Natural Machines 2.0 to Carnegie Hall. The performance featured The Knights orchestra and special guests Becca Stevens and Miguel Zenón. The house was full; it was the thrill of a lifetime. I’m particularly grateful to the many friends who came, some from a great distance. Below are my program notes for the concert, along with a technical note diving deeper into how the project functions behind the scenes.

In the Artist’s Own Words

For as long as I can remember, I’ve been fascinated by the idea of free improvisation: that it’s possible to create an entire piece of music, never-before-heard, in the moment, out of nothing. Growing up as a jazz pianist, I learned to improvise over pre-determined harmonic progressions, but I yearned for a greater freedom: not only to improvise within a given structure, but to improvise the structure itself. As an adult, I’ve slowly learned the discipline necessary to do this in a way that feels meaningful, whether alone at the piano (on my recent album Inventions / Reinventions, for example) or with a big-eared collaborator (Lee Konitz, memorably). But it’s only recently that I’ve begun to wonder: what could free improvisation look like with an orchestra?

In 2010, I composed my first piano concerto, and left substantial room for improvisation in the piano part. The orchestra parts, however, were written out in the conventional way. At the same time, I began to bring my childhood love of computer programming into my music. I wrote algorithms — systems of musical rules — which could improvise with me in real time, expressing themselves acoustically through the Yamaha Disklavier player piano. This led to my 2019 album Natural Machines, where I explore the intersection of natural (intuitive, emotional) and mechanical (logical, rule-based) processes in music, doing free improvisation with an algorithm as creative partner.

It’s worth remembering that many of the greatest musical minds were just as concerned with the rules that structured their music as with the emotion that made it soar. This is certainly true of Bach, and also of John Coltrane. I believe it’s when these two great forces meet — the algorithmic and the spiritual — that art is at its strongest.

In 2022, the Eugene Symphony approached me about writing a new piano concerto. I imagined composing in the conventional way at first. Then the symphony’s conductor, Francesco Lecce-Chong, suggested I incorporate elements from Natural Machines, in particular the live visual representations of the music I’d created.

Over the last three years, the idea of striking some balance between traditional notation and computer-augmented free improvisation slowly percolated in me. Then, in mid-2024, at the tail end of two large conventional composition projects, I suddenly felt like doing something radical: what if the entire orchestra, along with myself as the soloist, could enter the stage not knowing what was going to happen? What if we created the music together, in the moment? Wouldn’t that be exciting?

I devised a way to send musical notation in real time to each member of the orchestra, on mobile devices they could easily set on their music stands. I started to think about the piano somewhat like an organ, with the ability to pull organ stops (or their digital equivalent on my iPad) to select different collections of sounds. I designed and built a system of foot pedals that enabled me to use my feet as much as my hands. I’ve personally labored over every line of code, from the ones that create the network of devices to those responsible for the real-time visuals, just as much as I’ve labored in the past over the individual notes of a composition.

The difference here, of course, is that every time this “piece” — or this process for a piece — is performed, it will sound different. Yet, there is a theme that will carry through the evening: the notion of harmony, in its broadest sense, from the delicious interactions of sound frequencies to the relative motions of orbiting planets, and perhaps most of all, to the hope that we — along with the orchestra, taken as a microcosm for society — may live in spontaneous, harmonious balance with one another.

Technical note

By temperament, I’ve always liked to approach problems from the ground up. There’s something about being present in every aspect of how something works, from the smallest details to the overall structure, that feels right to me. For Natural Machines 2.0, I’ve taken this approach as far as I ever have, building my own gear, writing custom code libraries, and even learning to create my own integrated circuits.

Thankfully, I didn’t have to create the instrument at the heart of it all. I’m performing on a Yamaha Disklavier CFX, a real acoustic piano that communicates every key and pedal movement to my computer via MIDI, and which has the magical ability to play by itself. The Disklavier allows me to play naturally while the computer listens, analyzing and responding to my playing with both visuals and sound. In Natural Machines 1.0, the computer expressed itself by playing the same piano I play; in 2.0, it can also play the orchestra.

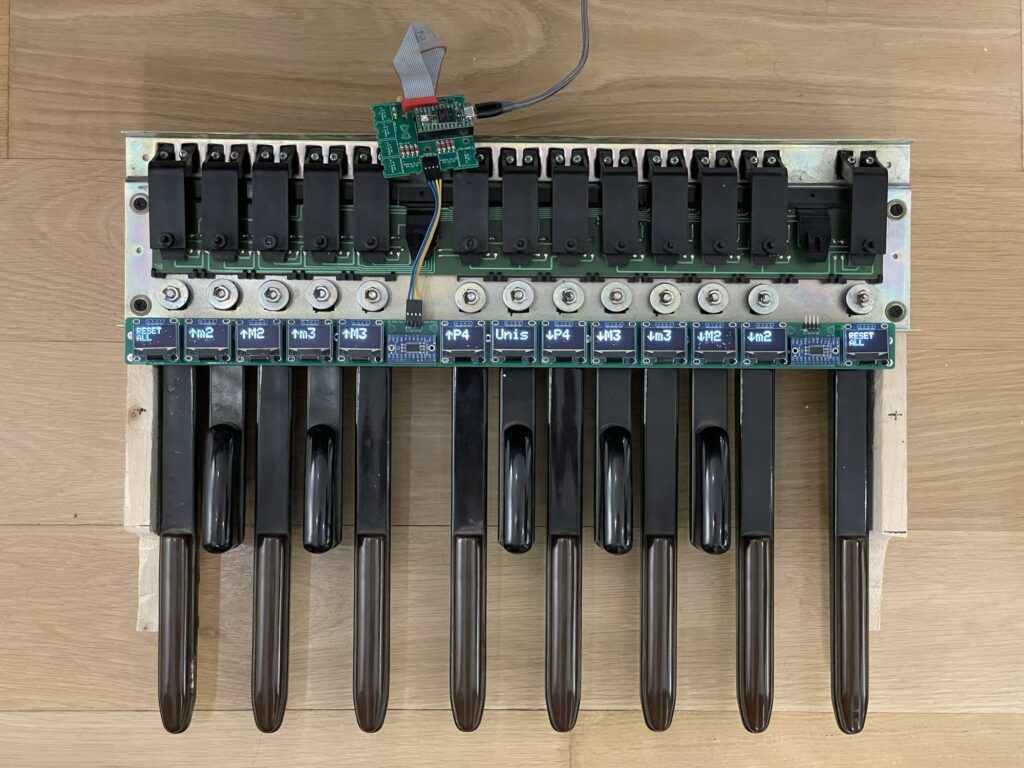

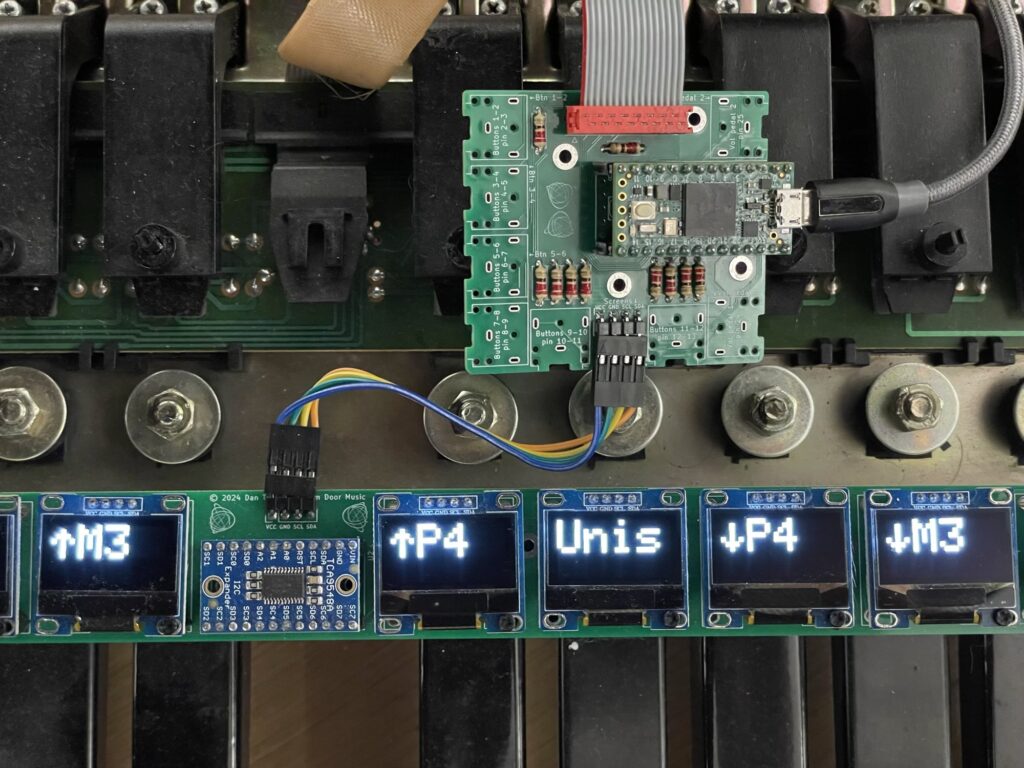

To control the many layers of the system while performing, I built several interfaces. Beneath the piano keyboard, to the right, sits an adapted organ pedalboard with MIDI output. This pedalboard doesn’t only play notes: each pedal has a small display above it that shows its current function, for example “↑ m3” if that pedal transposes a musical voice up a minor third. The functions of the pedals can change depending on context, and the displays update dynamically as the computer sends them new instructions.

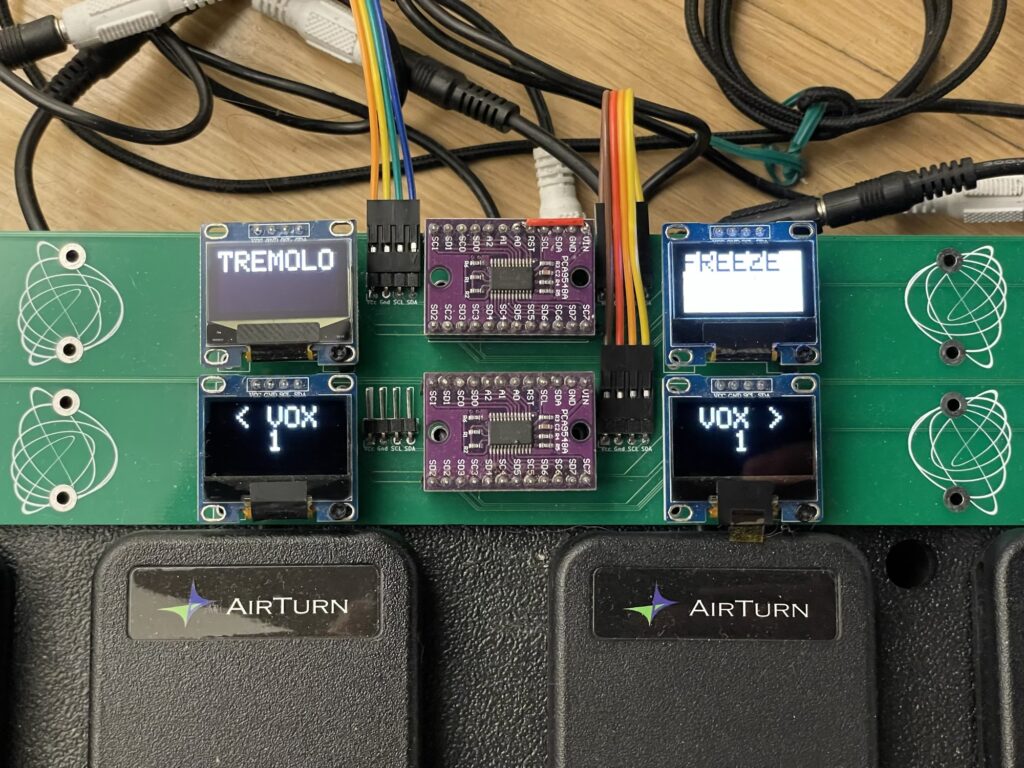
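
The context-dependent pedal labels described above can be pictured with a small sketch. This is not the project's actual code: the `PedalFunction` class, the context names, and every label other than “↑ m3” are hypothetical, chosen only to show how one pedal can carry both a display label and a musical transformation that changes with context.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Notes = List[int]  # MIDI note numbers


@dataclass
class PedalFunction:
    label: str                       # text shown on the pedal's small display
    apply: Callable[[Notes], Notes]  # what pressing the pedal does to a voice


def transpose(semitones: int) -> Callable[[Notes], Notes]:
    """Return a function that shifts every note by a fixed interval."""
    def _apply(notes: Notes) -> Notes:
        return [n + semitones for n in notes]
    return _apply


# Hypothetical contexts: the same physical pedals get new roles and labels.
CONTEXTS: Dict[str, List[PedalFunction]] = {
    "thirds": [
        PedalFunction("↑ m3", transpose(3)),   # up a minor third = 3 semitones
        PedalFunction("↓ m3", transpose(-3)),
    ],
    "octaves": [
        PedalFunction("↑ 8va", transpose(12)),
        PedalFunction("↓ 8va", transpose(-12)),
    ],
}


def labels_for(context: str) -> List[str]:
    """Labels the computer would send to the displays when the context changes."""
    return [pedal.label for pedal in CONTEXTS[context]]


voice = [60, 64, 67]                           # C major triad
shifted = CONTEXTS["thirds"][0].apply(voice)   # E-flat major triad
```

Keeping the label and the behavior in one object means the display can never disagree with what the pedal actually does.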

On the floor to my left sits an array of twelve pedals, each with its own display and all connected to a microcontroller that communicates pedal presses to the computer via MIDI. These pedals can trigger all kinds of real-time transformations: switching instrument assignments, changing articulations, freezing loops, or anything else you can imagine. The device also supports expression pedals that continuously transmit data, allowing me to shape parameters fluidly with my feet while keeping both hands on the piano. I designed the green integrated circuits and had them manufactured, and couldn’t resist decorating them with stencils of my TriadSculptures.

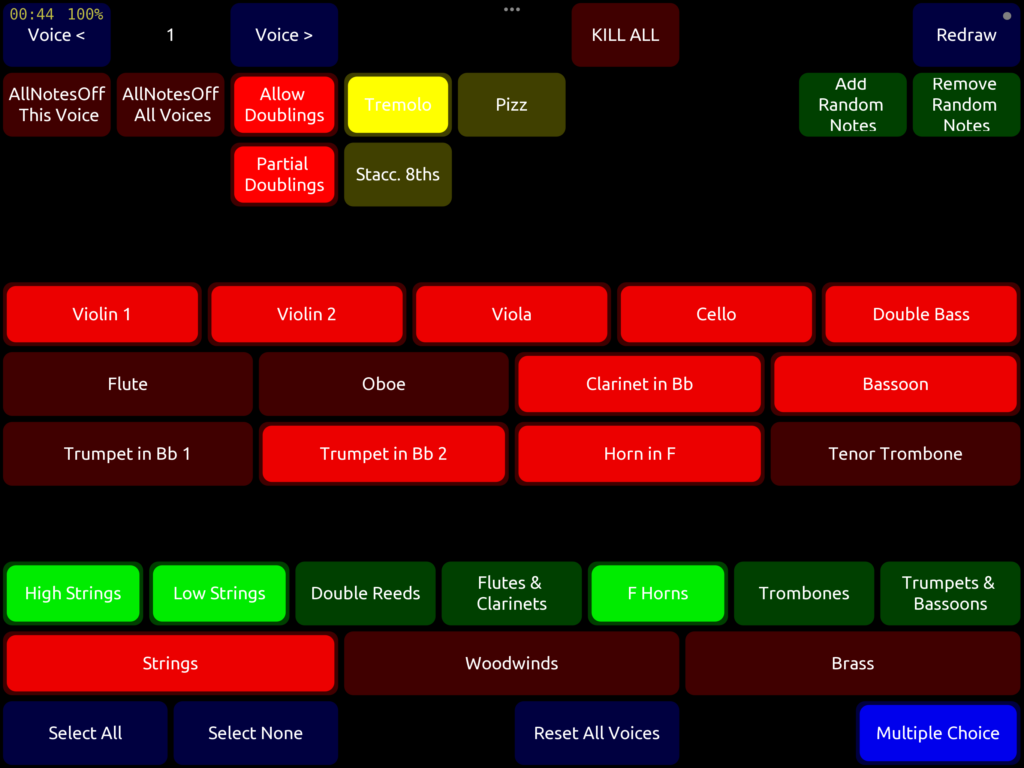
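
To give a sense of what "via MIDI" might look like on the computer's side, here is a minimal sketch that decodes raw three-byte MIDI messages. The text only says the pedals communicate over MIDI, so the mapping is an assumption: pedal presses are treated as note-on/note-off messages and expression pedals as control-change messages. The status bytes themselves (0x90, 0x80, 0xB0) are standard MIDI.

```python
def decode_midi(msg: bytes) -> dict:
    """Decode one 3-byte MIDI channel message into a small event dict."""
    status, data1, data2 = msg[0], msg[1], msg[2]
    kind = status & 0xF0      # upper nibble: message type
    channel = status & 0x0F   # lower nibble: MIDI channel (0-15)

    # Note-on with non-zero velocity: assumed here to mean "pedal pressed".
    if kind == 0x90 and data2 > 0:
        return {"type": "press", "channel": channel, "pedal": data1}

    # Note-off, or note-on with velocity 0 (a common equivalent): "released".
    if kind == 0x80 or kind == 0x90:
        return {"type": "release", "channel": channel, "pedal": data1}

    # Control change: assumed here to carry continuous expression data,
    # normalized from the 7-bit range 0-127 to 0.0-1.0.
    if kind == 0xB0:
        return {"type": "expression", "channel": channel,
                "controller": data1, "value": data2 / 127}

    return {"type": "other", "channel": channel}


press = decode_midi(bytes([0x90, 5, 100]))   # pedal number 5 pressed
sweep = decode_midi(bytes([0xB0, 11, 127]))  # expression pedal fully down
```

A press is a single event, while an expression pedal produces a stream of control-change values, which is what makes it suitable for shaping a parameter continuously.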

Two iPads complete the control setup. The first functions as an instrument selector, akin to the stops used by organists. At any given moment, the music I play or that my algorithms generate can be routed to any of eight “voices” — conceptual layers within the music — and each voice can be assigned to one or more orchestral instruments. The iPad lets me choose which instruments are active in the current voice, and its layout can change when the musical algorithm changes to offer context-specific controls.

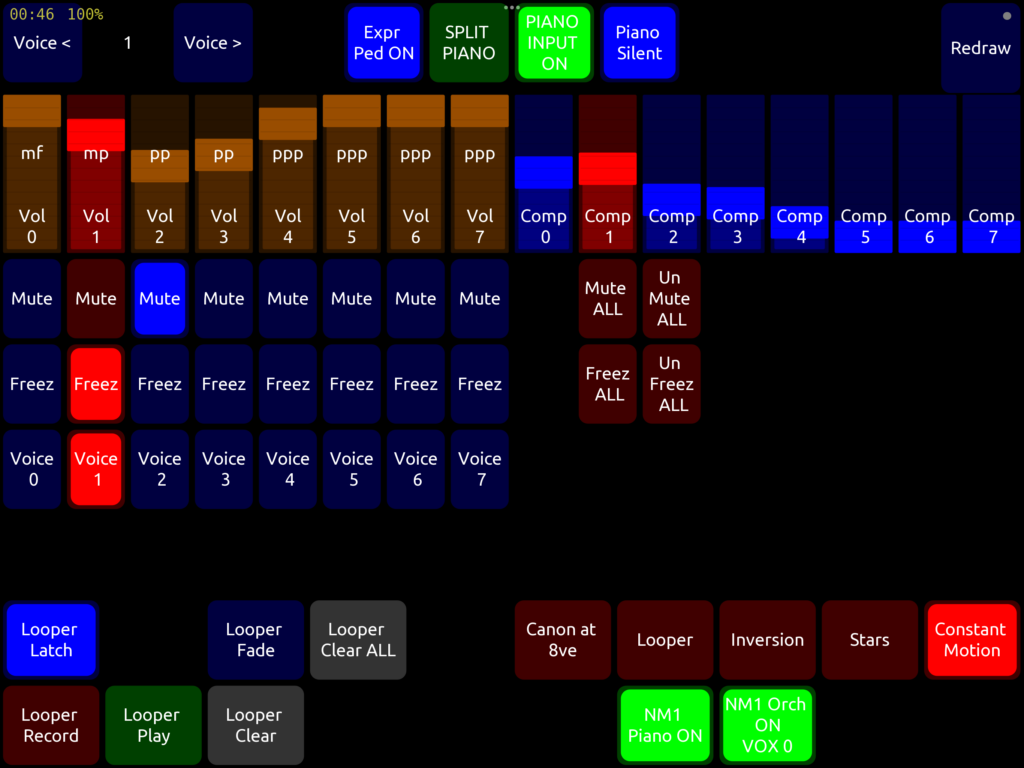
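
The relationship between voices and instruments can be sketched as a simple routing table. This is an illustration under stated assumptions, not the project's code: the `VoiceRouter` class, its method names, and the instrument names are hypothetical. Only the count of eight voices and the idea of toggling instruments per voice, like organ stops, come from the description above.

```python
from typing import Dict, Set

NUM_VOICES = 8  # eight conceptual layers, as described in the text


class VoiceRouter:
    """Tracks which instruments are currently assigned to each voice."""

    def __init__(self) -> None:
        self.assignments: Dict[int, Set[str]] = {v: set() for v in range(NUM_VOICES)}

    def toggle(self, voice: int, instrument: str) -> None:
        """Pull or push a 'stop': add the instrument if absent, else remove it."""
        stops = self.assignments[voice]
        if instrument in stops:
            stops.remove(instrument)
        else:
            stops.add(instrument)

    def route(self, voice: int, note: int) -> Dict[str, int]:
        """Return the note as it would be delivered to each assigned instrument."""
        return {name: note for name in sorted(self.assignments[voice])}


router = VoiceRouter()
router.toggle(0, "oboe")
router.toggle(0, "violin 1")
router.toggle(1, "cello")
doubled = router.route(0, 72)   # voice 0 is doubled by two instruments
router.toggle(0, "oboe")        # tapping the same stop again removes it
solo = router.route(0, 72)
```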

The second iPad lets me shape dynamics and other performance parameters in real time. Here I can limit or expand the volume range of each voice, trigger or stop loops, and split the piano so that only a portion of the keyboard feeds data to the computer. This iPad also lets me choose the current visual mode, altering how the music is represented on the projection screen.
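
Two of these controls lend themselves to a short sketch: constraining a voice's volume range and splitting the keyboard. The function names and the linear mapping are assumptions made for illustration. The text does not say how the system scales dynamics, only that the range can be limited or expanded.

```python
def scale_velocity(velocity: int, lo: int, hi: int) -> int:
    """Map a MIDI velocity (1-127) linearly into the range [lo, hi].

    A narrow range flattens the dynamics of a voice; a wide one preserves
    the contrast of the original playing.
    """
    return round(lo + (velocity - 1) * (hi - lo) / 126)


def in_split(note: int, low_key: int, high_key: int) -> bool:
    """True if the key lies in the portion of the keyboard feeding the computer."""
    return low_key <= note <= high_key


# Hypothetical settings: voice limited to a soft-to-medium range,
# and only keys from middle C (60) to the top of the piano (108) are tracked.
played = [(48, 90), (64, 1), (72, 64), (84, 127)]   # (note, velocity) pairs
forwarded = [
    (note, scale_velocity(vel, 40, 80))
    for note, vel in played
    if in_split(note, 60, 108)
]
```

With these settings the note below middle C stays on the piano alone, and the remaining notes reach the computer with velocities squeezed between 40 and 80.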

What to do with all this input? At the center of the system is a laptop running three programs. The first, written in SuperCollider, acts as the central brain of the project. It receives data from all the input devices — the Disklavier, the pedalboards, the iPads, microphones — and processes that information to generate musical output, organized into independent voices. Each voice carries notes, dynamics, and articulations, as well as its own selection of orchestral instruments. This musical data gets sent out from SuperCollider to be represented and played.

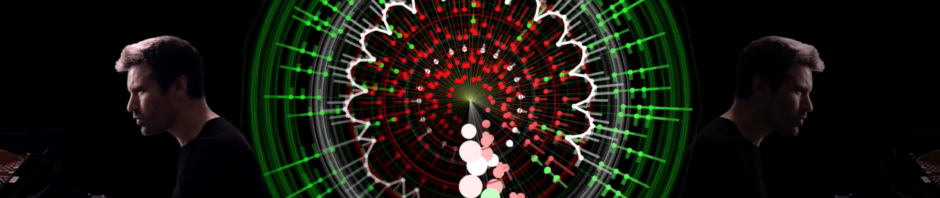

The second program, written in Processing, translates the musical data into real-time visuals, to be projected on a screen behind the musicians. It receives information from SuperCollider via the OSC protocol, and displays the notes played by the orchestra and myself, with each voice represented visually in distinct and expressive ways. The projection system must be low-latency so that the imagery, which is so intimately linked to the notes being played, feels organically connected to the sound.

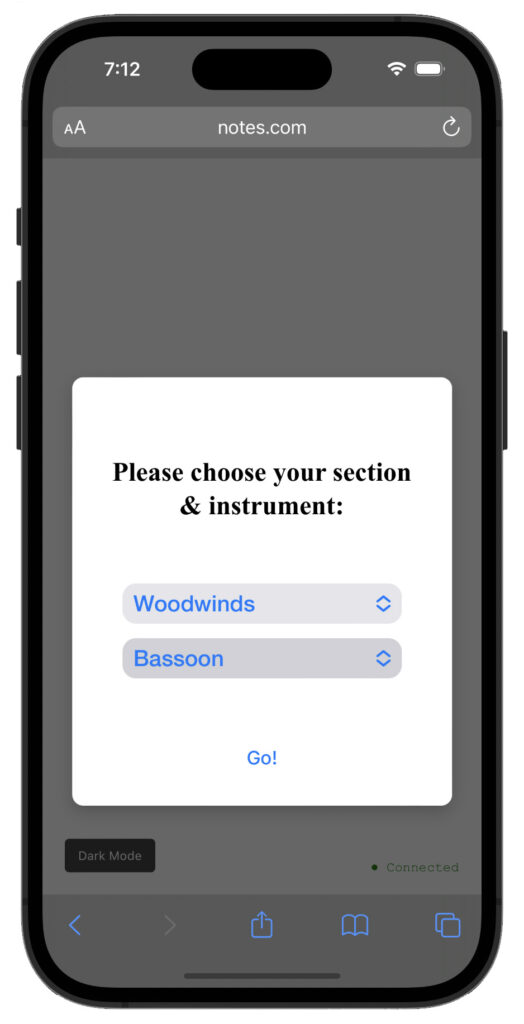

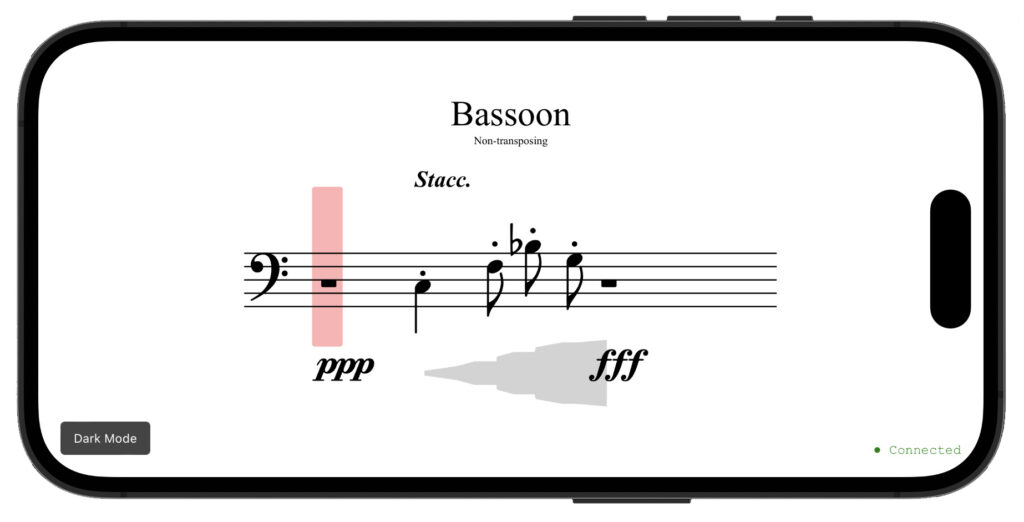
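
For readers curious about what an OSC message actually contains, here is a minimal sketch that builds one by hand. The byte layout follows the OSC 1.0 format (null-terminated strings padded to four bytes, a type-tag string beginning with a comma, big-endian 32-bit numbers). The address `/voice/note` and its arguments are hypothetical, since the text does not describe the messages the project really sends.

```python
import struct


def osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def osc_message(address: str, *args) -> bytes:
    """Build an OSC message from int, float, and str arguments."""
    tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not handled in this sketch")
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)   # big-endian 32-bit integer
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)   # big-endian 32-bit float
        elif isinstance(arg, str):
            tags += "s"
            payload += osc_string(arg)
        else:
            raise TypeError(f"unsupported argument type: {type(arg).__name__}")
    return osc_string(address) + osc_string(tags) + payload


# Hypothetical message: voice 3 plays MIDI note 60 at half intensity.
packet = osc_message("/voice/note", 3, 60, 0.5)
```

OSC packets like this are typically carried over UDP. Each one is small and needs no connection setup, which suits the low-latency requirement described above.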

The third program, written in JavaScript, serves a web interface to each orchestral musician over a closed network, created with a specialized Wi-Fi router of the kind used by hotels and airports. Every player connects their phone or tablet to the network, opens a browser, and visits a local website. There, they select their instrument, and a notation display appears. The page shows a traditional five-line staff, automatically switching clefs for multi-clef instruments like cello or bassoon, and applying appropriate transpositions for instruments like French horn or clarinet. When I send commands to the JavaScript program from SuperCollider, notes, dynamics, and articulations specific to this instrument appear on the staff for the musician to play.
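
The transposition and clef logic can be sketched in a few lines. The actual program is written in JavaScript. This Python version is only an illustration, and the table names, instrument keys, and clef thresholds are assumptions. The transposition intervals themselves are standard: a horn in F reads a perfect fifth above sounding pitch, and a B-flat clarinet a major second above.

```python
# Semitones to add to the sounding pitch to get the written pitch.
WRITTEN_OFFSET = {
    "horn in F": 7,           # written a perfect fifth above sounding pitch
    "clarinet in B-flat": 2,  # written a major second above sounding pitch
    "cello": 0,
    "bassoon": 0,
}

DEFAULT_CLEF = {"cello": "bass", "bassoon": "bass"}

# (threshold, clef) pairs checked from highest to lowest. The thresholds are
# hypothetical; real engraving choices depend on context and taste.
CLEF_RULES = {
    "cello": [(72, "treble"), (60, "tenor")],
    "bassoon": [(60, "tenor")],
}


def written_pitch(instrument: str, sounding: int) -> int:
    """MIDI note number to display for a given sounding pitch."""
    return sounding + WRITTEN_OFFSET.get(instrument, 0)


def choose_clef(instrument: str, written: int) -> str:
    """Pick a clef so the note stays close to the staff."""
    for threshold, clef in CLEF_RULES.get(instrument, []):
        if written >= threshold:
            return clef
    return DEFAULT_CLEF.get(instrument, "treble")


horn_note = written_pitch("horn in F", 60)   # sounding middle C is written as G
cello_clef = choose_clef("cello", 65)        # F above middle C
```

A practical version would likely add some hysteresis so that the clef does not flip back and forth when a line hovers around a threshold.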

In this way, every musician receives live notation generated by what I’m playing at the piano and the algorithms I’ve designed, while the audience sees a parallel visual interpretation projected above the stage.

It’s all a genuinely wild experiment — the fruit of some crazy dream. The notion of being able to improvise with an orchestra seemed irresistible to me, and I couldn’t exactly tell you why. I simply felt compelled to venture down this road, to see what was possible. I thank you, and the musicians with me on stage tonight, for coming along for the ride.